Fog Computing for IoT

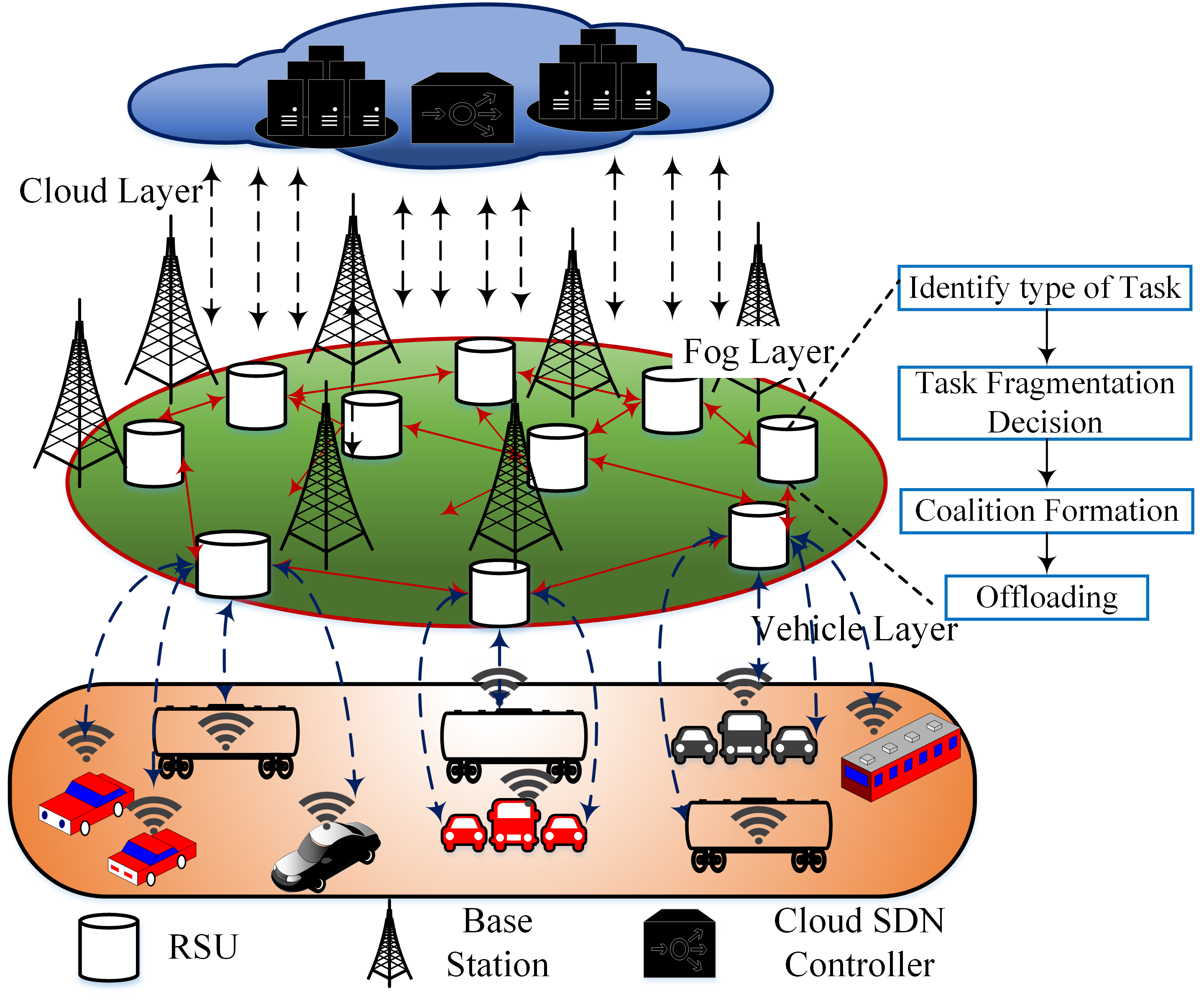

Fog computing is a paradigm that provides services to users at the edge of the network. The fog computing platform is typically sandwiched between the cloud servers and the end users. In a traditional network, the devices at the edge layer, such as routers, gateways, bridges, and hubs, usually perform only networking operations. In a fog-enabled environment, however, researchers envision these devices performing both computational and networking operations simultaneously. Although these fog devices are resource-constrained compared to the cloud servers, the geographical spread and decentralized nature of the fog architecture help in offering reliable services over a wide area. Further, with fog computing, several manufacturers and service providers can offer their services at affordable rates. Another advantage of fog computing is the physical location of its devices: because they are closer to the users than the cloud servers are, they can significantly reduce operational latency.
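The latency trade-off described above can be illustrated with a simple back-of-the-envelope model. The sketch below is not the SWAN group's model. It assumes that response latency is the sum of round-trip propagation delay, upload transmission time, and processing time, and it ignores queuing, routing hops, and result download. The `Node` class, the `response_latency_s` function, and all parameter values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Assumed signal propagation speed in optical fibre (about 2/3 of c), in m/s.
PROPAGATION_SPEED_M_PER_S = 2e8


@dataclass
class Node:
    """A hypothetical compute node as seen from one IoT device."""
    name: str
    distance_m: float     # one-way distance from the IoT device to the node
    bandwidth_bps: float  # rate at which the request payload is uploaded
    cpu_hz: float         # CPU cycles per second allotted to the task


def response_latency_s(node: Node, payload_bits: float, task_cycles: float) -> float:
    """Round-trip propagation + upload transmission + processing time, in seconds.

    Queuing, routing hops and the (usually small) result download are ignored.
    """
    propagation = 2 * node.distance_m / PROPAGATION_SPEED_M_PER_S
    transmission = payload_bits / node.bandwidth_bps
    processing = task_cycles / node.cpu_hz
    return propagation + transmission + processing


# Illustrative values only: a nearby but weaker fog gateway vs a distant, faster cloud.
fog = Node("fog gateway", distance_m=1_000, bandwidth_bps=100e6, cpu_hz=1e9)
cloud = Node("cloud server", distance_m=1_500_000, bandwidth_bps=100e6, cpu_hz=10e9)

for label, cycles in [("light task", 1e7), ("heavy task", 1e9)]:
    for node in (fog, cloud):
        ms = response_latency_s(node, payload_bits=1e6, task_cycles=cycles) * 1e3
        print(f"{label:10s} on {node.name:12s}: {ms:8.2f} ms")
```

With these assumed numbers, the fog gateway answers the light task sooner (about 20 ms versus 26 ms) because it avoids the long propagation path. For the heavy task, the cloud's faster CPU wins (about 125 ms versus 1010 ms). This reflects the resource constraints of fog devices noted above.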

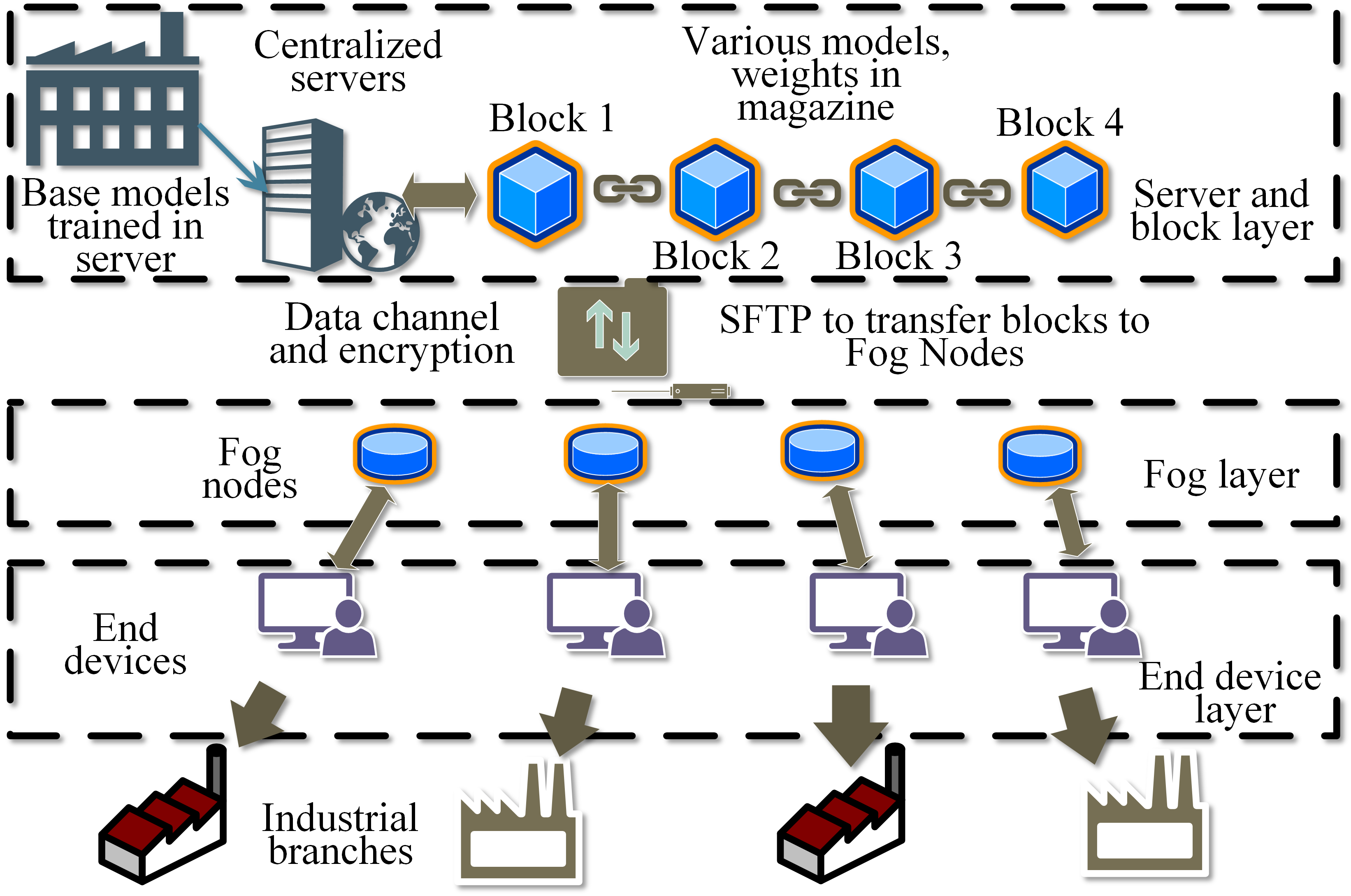

In the SWAN research group, we studied the suitability of fog computing for IoT environments and theoretically modeled its parameters to support IoT applications. We also studied its performance, predominantly in IoT environments, from different perspectives such as computation offloading aimed at reducing operational latency and energy consumption. We also formulated models for analyzing the performance of fog devices while ensuring higher QoS and improved performance at the user end. Presently, we are also developing real fog-enabled platforms.
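To make the offloading trade-off between latency and energy concrete, here is a minimal sketch of a binary offloading decision. It uses one common textbook formulation and is not the SWAN group's published models. Local execution time is cycles divided by CPU frequency. Local energy follows the dynamic-CPU model `kappa * f**2 * cycles`. When a task is offloaded, the device only pays for uploading its input to a fog node. Time and energy are normalised by their local values and combined with a weight. The classes `Task` and `Device`, the function `decide`, the weight, and all numbers are hypothetical assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Task:
    input_bits: float  # data uploaded to the fog node if the task is offloaded
    cycles: float      # CPU cycles required to execute the task


@dataclass
class Device:
    cpu_hz: float      # local CPU frequency of the IoT device
    kappa: float       # assumed effective switched capacitance: energy per cycle = kappa * f**2
    tx_power_w: float  # radio transmit power while uploading
    uplink_bps: float  # achievable uplink rate to the fog node


def local_cost(task: Task, dev: Device) -> tuple[float, float]:
    """(time in s, device energy in J) for executing the task on the device."""
    time_s = task.cycles / dev.cpu_hz
    energy_j = dev.kappa * dev.cpu_hz ** 2 * task.cycles
    return time_s, energy_j


def offload_cost(task: Task, dev: Device, fog_cpu_hz: float) -> tuple[float, float]:
    """(time in s, device energy in J) for uploading the task and running it on a fog node."""
    upload_s = task.input_bits / dev.uplink_bps
    time_s = upload_s + task.cycles / fog_cpu_hz
    energy_j = dev.tx_power_w * upload_s  # the device only spends energy transmitting
    return time_s, energy_j


def decide(task: Task, dev: Device, fog_cpu_hz: float, weight_time: float = 0.5) -> str:
    """Return 'fog' if offloading has the lower weighted cost, else 'local'.

    Time and energy are normalised by their local-execution values so the
    weighted sum is dimensionless; local execution therefore always costs 1.
    """
    t_loc, e_loc = local_cost(task, dev)
    t_off, e_off = offload_cost(task, dev, fog_cpu_hz)
    offload = weight_time * t_off / t_loc + (1 - weight_time) * e_off / e_loc
    return "fog" if offload < 1.0 else "local"


# Illustrative parameters only, not measured values.
device = Device(cpu_hz=1e9, kappa=1e-27, tx_power_w=0.5, uplink_bps=10e6)
compute_heavy = Task(input_bits=1e5, cycles=1e9)  # small input, heavy computation
data_heavy = Task(input_bits=8e7, cycles=1e7)     # large input, light computation

print(decide(compute_heavy, device, fog_cpu_hz=5e9))
print(decide(data_heavy, device, fog_cpu_hz=5e9))
```

Under these assumptions, the compute-heavy task is sent to the fog node because uploading a small input is cheap compared to running a billion cycles locally. The data-heavy task stays on the device because its upload would dominate both latency and energy. Normalising by the local values avoids adding seconds to joules directly. Other weighting or normalisation schemes are equally possible.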